We are often overwhelmed by the “newness” of Artificial Intelligence. We treat Large Language Models (LLMs) as sudden apparitions—miracles of silicon that appeared overnight.

However, for those of us in enterprise design and systems thinking, these tools are simply the latest iteration of a much older, more profound discipline: Cybernetics.

At Teracore, we believe that to master modern automation, one must first understand the “science of the steersman.” Whether you are a developer, a marketer, or a business leader, shifting your perspective from linear workflows to circular feedback loops is the key to navigating the complexity of 2026 and beyond.

This article synthesises the core insights from a recent deep-dive session produced by our partners at Xponent. To truly grasp the visual demonstrations of these concepts—from 1940s robotic tortoises to modern generative UI—we highly recommend watching the full video here.

1. The Cybernetic Shift: From Structure to Behaviour

The word Cybernetics originates from the Greek kubernetes, meaning “steersman,” “governor,” or “captain.” While the term has been largely eclipsed by “AI” or “Data Science” in modern parlance, the underlying mechanisms remain the same.

The Death of the Newtonian Clockwork

Historically, our intellectual model of the world was Newtonian. We viewed systems—whether an engine or an organization—as a collection of parts. To understand them, you took them apart. This was a linear model: Cause A leads to Effect B in a straight line.

Cybernetics, formalised by mathematician Norbert Wiener during World War II, flipped this script. While trying to solve the problem of aiming anti-aircraft guns at moving targets, Wiener realized that deterministic physics wasn’t enough. The enemy pilot reacts to the gunfire, forcing the gunner to readjust. This is not a line; it is a circle.

The Fundamental Shift:

- Old Question: What are the parts of the system?

- Cybernetic Question: What is the purpose of the system, and how does it behave to achieve it?

Entropy and Information (Negentropy)

In physics, the Second Law of Thermodynamics states that the entropy (disorder) of an isolated system never decreases. Wiener framed Information as Negative Entropy (Negentropy): information is a measure of order. A cybernetic system, be it a single cell, a human, or a machine, is a local pocket of order that uses feedback to hold itself stable against a chaotic environment.
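One common way to put a number on "order versus disorder" is Shannon entropy, which measures how uncertain a source of signals is. The sketch below is illustrative and not taken from the session; the function name `shannon_entropy` and the coin distributions are our own assumptions.

```python
import math

def shannon_entropy(probabilities):
    """Shannon entropy, in bits, of a discrete probability distribution."""
    # Outcomes with probability 0 contribute nothing, so they are skipped.
    return sum(-p * math.log2(p) for p in probabilities if p > 0)

fair_coin = [0.5, 0.5]      # maximally uncertain: every flip is a surprise
biased_coin = [0.9, 0.1]    # more ordered: most flips are predictable
certain = [1.0, 0.0]        # fully ordered: no surprise at all

print(shannon_entropy(fair_coin))              # 1.0
print(round(shannon_entropy(biased_coin), 3))  # 0.469
print(shannon_entropy(certain))                # 0.0
```

The lower the entropy, the more predictable (ordered) the source. In these terms, a feedback system does work to keep its own behaviour in the low-entropy range.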

How Cybernetic Feedback Loops Work

Data / signal enters system → System interprets input → Action / result produced → Output re-enters system

- Negative Feedback: Stabilises the system by correcting deviations and maintaining balance.

- Positive Feedback: Amplifies changes, accelerating growth or decline within the system.

2. The Mechanics of Feedback Loops

To lead a technically sophisticated team, you must distinguish between the two types of feedback loops that govern all systems.

Negative Feedback: The Stabiliser

Negative feedback is about balance and homeostasis. It corrects a system to return it to a “normal” state.

- Biological Example: Your pupils dilating or constricting based on light levels.

- Mechanical Example: A thermostat turning an oven element off once a target temperature is reached.

- Business Application: A project manager identifying a budget deviation and reallocating resources to return to the original plan.
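The thermostat example can be sketched in a few lines. This is a minimal on/off controller under made-up assumptions: the heating and cooling rates, the set point, and the function names are all hypothetical and chosen only to show the shape of the loop.

```python
def thermostat_step(temperature, set_point, heating_rate=2.0, cooling_rate=1.0):
    """One tick of an on/off thermostat: heat when below the set point, otherwise cool."""
    element_on = temperature < set_point          # the "sensor" compares reality to the goal
    change = heating_rate if element_on else -cooling_rate
    return temperature + change, element_on

def run_oven(start, set_point, ticks):
    """Run the loop for a number of ticks and record the temperature after each one."""
    temperature = start
    history = []
    for _ in range(ticks):
        temperature, _element_on = thermostat_step(temperature, set_point)
        history.append(temperature)
    return history

history = run_oven(start=20.0, set_point=180.0, ticks=200)
print(history[-6:])  # hovers within a degree or two of 180
```

The controller never "solves" the problem once and for all. It keeps measuring the deviation and pushing in the opposite direction, which is what makes the feedback negative.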

Positive Feedback: The Amplifier

Positive feedback drives a system further away from its starting point, creating a runaway cycle. It is not “positive” in a moral sense; it is additive.

- The Audio Screech: In a video call, if sound from a speaker enters the microphone, it amplifies until it becomes a deafening whistle.

- The Internet Troll: Replying to a troll with anger provides the “attention stimulus” they seek. The behaviour isn’t punished; it is reinforced, leading to more trolling.

- Business Application: A viral marketing campaign where every share increases the probability of more shares, leading to exponential growth.
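A rough way to see why these loops run away is to track what happens when a signal is fed back through the same loop repeatedly. In this sketch the `gain` parameter is an assumption standing in for things like "new shares generated per share" or "amplification per pass through the speaker and microphone".

```python
def feedback_loop(signal, gain, rounds):
    """Feed a signal back through the same loop; each pass multiplies it by `gain`."""
    levels = [signal]
    for _ in range(rounds):
        signal = signal * gain      # the output becomes the next input
        levels.append(signal)
    return levels

fizzles = feedback_loop(1.0, gain=0.5, rounds=10)   # each share triggers half a share
runaway = feedback_loop(1.0, gain=1.5, rounds=10)   # each share triggers 1.5 shares

print(fizzles[-1])  # 0.0009765625
print(runaway[-1])  # 57.6650390625
```

The tipping point is a gain of 1. Below it the loop dies out by itself, and above it every round makes the next round larger.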

Strategic Insight: A common and costly leadership error is to mistake a positive feedback loop (runaway growth or decline) for a negative one (a manageable fluctuation that will correct itself).

3. A Brief History of the Future: The Ratio Club

In post-war Britain, a group of eccentric geniuses known as The Ratio Club met in hospital basements to discuss the brain. Members included Alan Turing (the father of digital computing) and W. Ross Ashby (a psychiatrist).

They debated a fundamental question: Is the brain a digital computer (Turing’s view) or an analog balancing machine (Ashby’s view)? This cross-functional synthesis of biology, engineering, and psychology laid the groundwork for modern AI.

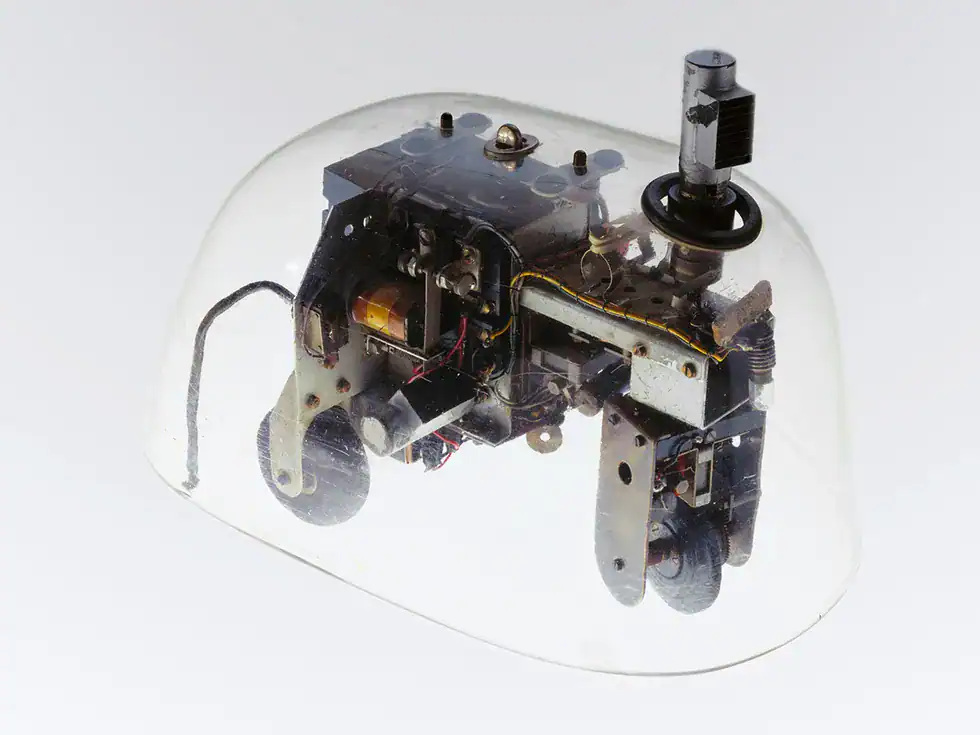

Emerging Intelligence: The Tortoise and the Homeostat

We often think intelligence requires massive datasets. Early cybernetic machines proved otherwise:

- Machina Speculatrix (1948): Built by W. Grey Walter, these “robotic tortoises” had no CPU or code, just two vacuum tubes. Yet, by interacting with light and obstacles through simple feedback loops, they exhibited “lifelike” behaviour, including a rhythmic “mating dance” when they encountered each other.

- The Homeostat: Ashby’s machine could survive “trauma.” If wires were swapped or polarity flipped, the machine would randomly cycle through internal configurations until it found a new state of stability. It can be read as an early hardware precursor of the trial-and-error search behind Reinforcement Learning.
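The Homeostat's strategy of "keep re-drawing settings until something stable turns up" can be imitated in software. This is a loose analogy under our own assumptions, not a model of Ashby's circuitry: the single `weight`, the bounds, and the function names are all hypothetical.

```python
import random

def is_stable(weight, steps=50, limit=10.0):
    """Apply x -> weight * x repeatedly; report whether x stays within bounds."""
    x = 1.0                                   # a small initial disturbance
    for _ in range(steps):
        x = weight * x
        if abs(x) > limit:
            return False                      # the "essential variable" left its safe range
    return True

def homeostat(rng, max_trials=1000):
    """Randomly re-draw the internal setting until the loop stays in bounds."""
    for trial in range(1, max_trials + 1):
        weight = rng.uniform(-3.0, 3.0)       # a random new internal configuration
        if is_stable(weight):
            return weight, trial
    raise RuntimeError("no stable configuration found")

weight, trials = homeostat(random.Random(0))
print(f"settled on weight={weight:.2f} after {trials} trial(s)")
```

Nothing here "understands" stability. The system simply rejects configurations that blow up and keeps the first one that does not.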

Project Cybersyn: The Socialist Internet

In the early 1970s, Chile attempted to manage the nationalised sector of its economy via Project Cybersyn. They connected 500 factories via telex machines to a central “Starship Enterprise” style control room.

- The Goal: A “Liberty Machine.” The system alerted workers to production drops before the government, aiming for autonomy rather than tyranny.

- The Lesson: It proved that real-time data could manage complex systems without heavy bureaucracy, though the project was tragically cut short by the 1973 coup.

4. Cybernetics in the Age of Generative AI

If you use ChatGPT or Claude, you are participating in a second-order cybernetic loop.

How LLMs Actually Work

An LLM doesn’t “know” things; it calculates the probability of the next “token” (chunk of text).

- Training: The model learns statistical patterns in how tokens follow one another across its training text.

- The Loop: It outputs a token, then feeds that token back into itself as part of the input used to guess the next one. This circular causality loop runs once for every token generated.
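The loop itself is easy to sketch with a toy lookup table in place of a neural network. The table `NEXT_TOKEN_PROBS` and its probabilities are invented for illustration, and a real model conditions on the whole preceding text rather than just the last token.

```python
import random

# Hypothetical next-token probabilities; a real model computes these with a neural network.
NEXT_TOKEN_PROBS = {
    "<start>": {"the": 1.0},
    "the": {"steersman": 0.6, "loop": 0.4},
    "steersman": {"corrects": 1.0},
    "loop": {"corrects": 1.0},
    "corrects": {"the": 0.3, "<end>": 0.7},
}

def generate(rng, max_tokens=20):
    """Sample one token at a time, feeding each output back in as the next input."""
    tokens = ["<start>"]
    for _ in range(max_tokens):
        options = NEXT_TOKEN_PROBS[tokens[-1]]
        next_token = rng.choices(list(options), weights=list(options.values()))[0]
        if next_token == "<end>":
            break
        tokens.append(next_token)   # the output becomes part of the next input
    return tokens[1:]               # drop the <start> marker

print(" ".join(generate(random.Random(42))))
```

Every pass through the `for` loop is one turn of the feedback cycle: predict, emit, feed back, repeat.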

RLHF: The Steersman

Reinforcement Learning from Human Feedback (RLHF) is the cybernetic governor that makes AI useful.

- The AI generates candidate outputs that are initially high-entropy (varied and often unhelpful).

- Humans act as the “captain,” scoring the outputs.

- The system updates its weights to probabilistically favour higher-scoring (more useful) outputs in the future.
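The three steps above can be imitated with a deliberately tiny stand-in. Production RLHF trains a separate reward model and uses algorithms such as PPO on a full neural network. Here the "model" is just three preference numbers, and the responses and human scores are hypothetical.

```python
import math

def softmax(logits):
    """Turn raw preference numbers into probabilities that sum to 1."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def train(human_scores, steps=200, learning_rate=1.0):
    """Nudge the policy towards responses that humans scored higher."""
    logits = [0.0] * len(human_scores)            # start with no preference
    for _ in range(steps):
        probs = softmax(logits)
        expected = sum(p * s for p, s in zip(probs, human_scores))
        # Gradient of the expected score with respect to each logit.
        logits = [z + learning_rate * p * (s - expected)
                  for z, p, s in zip(logits, probs, human_scores)]
    return softmax(logits)

# Hypothetical human ratings for three candidate responses (0 = useless, 1 = ideal).
responses = ["rambling answer", "acceptable answer", "clear, helpful answer"]
human_scores = [0.1, 0.5, 0.9]

final_probs = train(human_scores)
for response, prob in zip(responses, final_probs):
    print(f"{prob:.3f}  {response}")
```

The system starts out indifferent between the three responses and ends up strongly favouring the one the "captain" rated highest.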

Reinforcement Learning from Human Feedback (RLHF): how modern AI systems learn to produce better, more aligned outputs

1. Model Output: AI generates a response based on input data.

2. Human Feedback: Humans evaluate and rank outputs for quality and accuracy.

3. Reward Model: Feedback is converted into a scoring system.

4. Optimisation: Model updates to improve future responses.

Why RLHF Matters

- Aligns AI outputs with human expectations

- Improves accuracy, tone, and usefulness

- Reduces harmful or irrelevant responses

- Enables more natural, context-aware interactions

- Strength: Produces highly aligned, user-friendly AI systems.

- Risk: Over-optimisation may reduce diversity or introduce bias.

The Risk of “AI Inbreeding” (Model Collapse)

Cybernetic systems need a connection to reality to maintain “negative entropy.” Research shows that if you repeatedly train AI models on synthetic data (content created by other AI models), output quality degrades with each generation and can eventually collapse into nonsense. This is Model Collapse. Humans generate “negative entropy” because we interact with the physical world. For AI to stay smart, it requires a constant “grass-touching” connection to human creativity. Without us, the system becomes a closed loop and descends into disorder.
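A toy simulation gives some intuition for the closed loop. It is not a reproduction of the research: "training" is reduced to resampling from the previous generation's output, and the 50 "ideas" are an assumption chosen only to make the loss of diversity visible.

```python
import random

def next_generation(rng, data):
    """'Train' on the previous generation by resampling from it, with no fresh input."""
    return [rng.choice(data) for _ in data]

def diversity_over_time(rng, originals, generations):
    """Count how many distinct ideas survive after each closed-loop generation."""
    data = list(originals)
    diversity = [len(set(data))]
    for _ in range(generations):
        data = next_generation(rng, data)
        diversity.append(len(set(data)))
    return diversity

human_ideas = list(range(50))                  # 50 distinct "human-made" ideas
diversity = diversity_over_time(random.Random(7), human_ideas, generations=100)
print(diversity[0], diversity[10], diversity[-1])
```

Once an idea drops out of a generation, nothing inside the loop can bring it back, so the count of distinct ideas can only fall. Only fresh input from outside restores variety.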

5. Dystopian Feedback: Governance and the Dead Internet

As leaders, we must be wary of when cybernetic control systems turn into “control machines” rather than “liberty machines.”

- Algorithmic Governance: Tools like Google’s Perspective API score comments for toxicity. While useful, they act as “variety reducers.” They suppress signals that deviate from the norm, potentially flagging slang or unconventional thought as “toxic.”

- Algorithmic Management: Consider Uber. The app sets the goal, the passenger provides the feedback (the star rating), and the driver is simply a component in the loop. If the rating drops, the component is “deactivated.” It is the inverse of Project Cybersyn: data used for imposition rather than autonomy.

- Dead Internet Theory: We are entering a phase where bots talk to bots to game algorithms. This is a runaway positive feedback loop where human interaction is squeezed out by AI-generated “Shrimp Jesus” images or fake war footage designed solely for engagement metrics.

6. Practical Takeaways: Your Everyday Cybernetic Toolkit

How do you apply this? By building your own personal feedback loops to reduce cognitive load and eliminate repetitive tasks.

The RCTF Framework for Prompt Engineering

To get the most out of AI, move beyond simple questions. Use the RCTF framework:

- Role: Who is the AI? (e.g., “You are a senior Business Analyst at a top-tier firm.”)

- Context: What is the background? (e.g., “We are reviewing a project brief that has seen 20% scope creep.”)

- Task: What is the specific function? (e.g., “Identify the three most critical blind spots in this document.”)

- Format: How should it look? (e.g., “Output as a Markdown table with ‘Risk’ and ‘Mitigation’ columns.”)
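If you reuse the same structure often, it can help to turn it into a small template. The helper below is a sketch under our own assumptions: the name `build_rctf_prompt` and the section layout are hypothetical, and the example values are the ones from the list above.

```python
def build_rctf_prompt(role, context, task, output_format):
    """Assemble the four RCTF parts into one prompt string."""
    sections = [
        ("Role", role),
        ("Context", context),
        ("Task", task),
        ("Format", output_format),
    ]
    # Refuse to build a prompt with a blank section, since each one carries meaning.
    missing = [name for name, text in sections if not text.strip()]
    if missing:
        raise ValueError(f"Missing RCTF section(s): {', '.join(missing)}")
    return "\n\n".join(f"{name}: {text.strip()}" for name, text in sections)

prompt = build_rctf_prompt(
    role="You are a senior Business Analyst at a top-tier firm.",
    context="We are reviewing a project brief that has seen 20% scope creep.",
    task="Identify the three most critical blind spots in this document.",
    output_format="Output as a Markdown table with 'Risk' and 'Mitigation' columns.",
)
print(prompt)
```

The resulting string can be pasted into any chat interface. The check for empty sections is a small negative feedback loop of its own, catching an incomplete prompt before it is sent.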

RCTF in summary, a simple structure for consistently high-quality AI outputs:

- R (Role): Define who the AI is, e.g. “You are a senior marketing strategist.”

- C (Context): Provide background and constraints: audience, goals, tone, environment.

- T (Task): Clearly define what must be done. Be specific and outcome-focused.

- F (Format): Define how the output should look: structure, length, layout, style.

Why RCTF Works

- Reduces ambiguity in AI instructions

- Improves consistency and output quality

- Aligns AI responses with business goals

- Transforms prompting into a repeatable system

Strategic Automation Loops

- Google Alerts: Set up “Negative Entropy” harvesters for your industry or personal brand.

- IFTTT or Zapier: Create simple conditional chains (e.g., “If I am tagged in a photo on LinkedIn, save it to my Portfolio Drive”).

- Contextual Linking: Use AI to analyze your top-performing content and generate “infinite variant loops”—A/B testing new headlines based on the psychological triggers that worked in the past.

Practical Automation Workflow

Google Alert / Event → API / IFTTT / Zapier → LLM + Prompt Logic → Email / Dashboard / Action

How It Works

- Automated trigger detects new information or event

- Workflow tool routes data between systems

- LLM processes and transforms the data intelligently

- Final output delivers actionable insight or executes a task
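The four steps listed above can be sketched as plain functions before you commit to any particular tool. Everything here is a placeholder: `summarise_with_llm` is a hypothetical stand-in for a real LLM call, the events are invented, and in practice IFTTT or Zapier would handle the routing.

```python
def summarise_with_llm(text):
    """Hypothetical stand-in for an LLM call; swap in your provider's client here."""
    return f"[summary] {text[:60]}"

def route(event, keywords):
    """Workflow step: pass an event on only if its title mentions a keyword we care about."""
    title = event["title"].lower()
    return any(keyword.lower() in title for keyword in keywords)

def run_workflow(events, keywords, deliver):
    """Trigger -> route -> LLM -> deliver, returning how many items were delivered."""
    delivered = 0
    for event in events:
        if route(event, keywords):
            deliver(summarise_with_llm(event["title"]))
            delivered += 1
    return delivered

# Hypothetical alert payloads, invented for this example.
events = [
    {"title": "New cybernetics course launches for enterprise designers"},
    {"title": "Local bakery wins award"},
]
inbox = []
count = run_workflow(events, keywords=["cybernetics"], deliver=inbox.append)
print(count, inbox)
```

Passing `deliver` in as a function keeps the final step swappable: the same loop can append to a list, send an email, or update a dashboard.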

Conclusion: The Expert in the Loop

Cybernetics teaches us that we cannot understand a system by looking at its parts; we must watch it run. As we move into an era of ubiquitous AI, the most successful professionals will not be those who “out-code” the machine, but those who act as the Steersman.

By understanding feedback loops, entropy, and the difference between control and liberty, you can build systems that don’t just automate tasks, but elevate your strategic output.

For more insights on enterprise design and high-performance hosting, connect with our team.

FAQ: Understanding Cybernetics and AI

Q: Is Cybernetics just another word for AI?

A: Not quite. Cybernetics is the overarching study of control and communication in systems. AI is a specific, modern application of cybernetic principles (specifically feedback loops and pattern recognition) using digital computing.

Q: How can a non-technical leader apply “Negative Feedback” to their team?

A: Establish clear KPIs (the “set point”) and regular check-ins (the “sensor”). When the team’s output deviates from the KPI, the “feedback” should trigger a correction—like a training session or a resource shift—to bring the team back to the balanced state.

Q: What is the biggest threat of “Model Collapse” for businesses?

A: If a company relies solely on AI to write its marketing, SEO, and internal reports, it will eventually lose its “brand voice” and strategic edge. Because the AI is just remixing its own previous outputs, the content becomes generic and detached from the evolving market reality.

Q: Can cybernetics help with emotional intelligence at work?

A: Yes. Consider the “Client Email De-escalator” prompt mentioned in the talk. By using AI to strip the “emotional noise” (the positive feedback loop of anger) from an email, you can address the “technical problem” (the negative feedback loop of correction) with a calm, professional tone.

Q: What is “Second-Order Cybernetics”?

A: First-order cybernetics is observing a system from the outside (like a scientist looking at a thermostat). Second-order cybernetics is when the observer is part of the system. In modern business, you aren’t just using AI; your interaction with the AI changes how the AI behaves, which in turn changes your strategy. You are inside the loop.